The third and final presentation of my research trip in France was delivered on Monday, April 27, at the colloquium “Créativités artificielles : approches critiques de l’IA” at the Université Côte d’Azur, Nice.

My talk was titled “From Literacy to Ecology: Rethinking Critical-Creative AI After Automation”. The piece gestured at once backwards, to some of my earliest work in genAI on ‘glitched companions’ and the smol and the weird, and forwards, to where and how we might think about AI systems and outputs as part of a broader ecosystem and environment. I want to shift the conversation, and my research, from “How can I read this?” to “How can I live here?”

I opened with the ‘literacy trap’ of technology, where outcomes- and skills-focused frameworks are instantiated across workplaces and education providers.

These frameworks are normative: they lock people into particular modes of use and value systems, and they position the user in a specific relationship to a tool. Furthermore, they assume that generative AI models are stable objects that can be learned and worked; that you can achieve a measurable standard of competency; that these are machines that can be mastered.

The paper proposes a shift in metaphor from literacy, which implies a stable object to be learned, to ecology, which implies an environment to be inhabited. Reviving Guattari’s notion of ‘ecosophy’, I present an ecological approach to AI across critical, creative, and communicative registers.

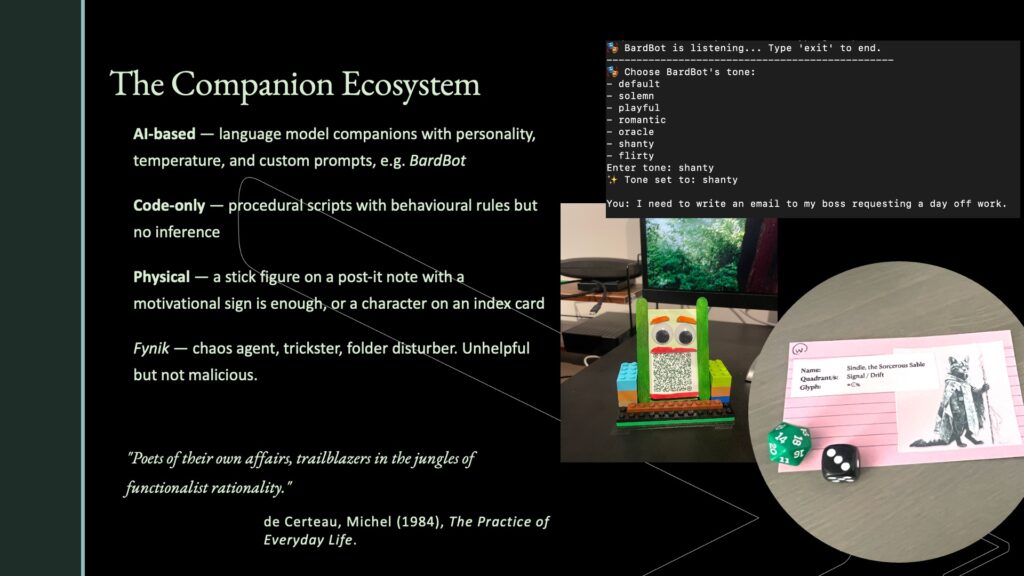

As an example activation of this idea, I presented my companions Wuwu and Fynik: little scripted agents that mess with folders on my computer. This work is being developed theoretically and philosophically, but also practically, as a game/RPG artefact.

The three-day event in Nice and Cannes brought together international scholars around critical, ethical, creative, scientific, and experimental interrogations of artificial intelligences, generative and otherwise. My contribution will be submitted for consideration for a just-announced publication from the event.